Program Evaluation

Tamrack

Business Unit: Learning and Performance Solutions

Evaluand: New Employee Onboarding (NEO)

Note: This example is a case study presented as part of the OPWL 530 Evaluation course. While the evaluation process was real, the business and program are fictitious.

Background

Tamrack, Inc. is a cleaning supply company founded in 2018 and headquartered in Boise, Idaho, with over 200 employees. Tamrack's clients are hospitals and nursing homes, so regulatory compliance and chemical handling are critical to employee preparedness. The company maintains a geographically dispersed workforce with branches across five states in the United States. In 2020, driven by the COVID-19 pandemic, Tamrack transitioned its New Employee Onboarding (NEO) program to an entirely online format. It retained the virtual delivery afterward because it reduced costs and supported the company's growing remote workforce.

The Learning and Performance Solutions (LPS) department is currently responsible for the maintenance and deployment of the NEO program. This company-wide initiative is designed to provide a consistent introduction to the organization, with the ultimate goal of improving employee satisfaction, enhancing work-life balance, and maximizing talent acquisition while reducing attrition. The program consists of a synchronous virtual session on the first day, followed by self-paced online modules within the company's Learning Management System (LMS).

Request

After speaking with our client, Ms. Gibson, my team learned that the primary intent of the evaluation was to identify areas for improvement and that the intended users plan to use the findings to revise the program. We therefore decided to conduct a goal-based formative evaluation of the NEO program, aimed at identifying improvements that could lead to more positive outcomes. We followed Chyung's (2019) 10-step evaluation process.

Program

The NEO program serves approximately 30 new hires annually across all roles. The program begins with a synchronous first-day meeting where representatives from various business units present and answer questions. New hires then complete the remainder of the week asynchronously through the company's LMS at their own pace. During the first week, each new hire is paired with a mentor and encouraged to schedule a meet-and-greet. The evaluation scope was limited to this structured first-week experience.

Stakeholders

- LPS Director (client), HR Director, Instructional Designers, CEO, IT representatives, HR representatives

- New employees

- Direct supervisors/managers of new hires, NEO Mentors, Current employees, Customers

Program Logic Model and Dimensions

After an extant data review of the NEO program, my team developed a Program Logic Model (PLM) that mapped the NEO program's resources, activities, outputs, outcomes, and impact. Using the PLM, we identified two dimensions critical to the desired goals of the program: role readiness and feeling welcomed.

We prioritized the Activities and Outcomes categories of the PLM for review, as formative evaluations focus on ways to improve the quality of items listed in the resources, activities, and outputs categories (Chyung, 2019). Both dimensions were assigned equal importance weighting based on the client's input:

Employee Role Readiness (Critical)

PLM: Activities, Outcomes

Employee Feeling Welcomed (Critical)

PLM: Activities, Outcomes

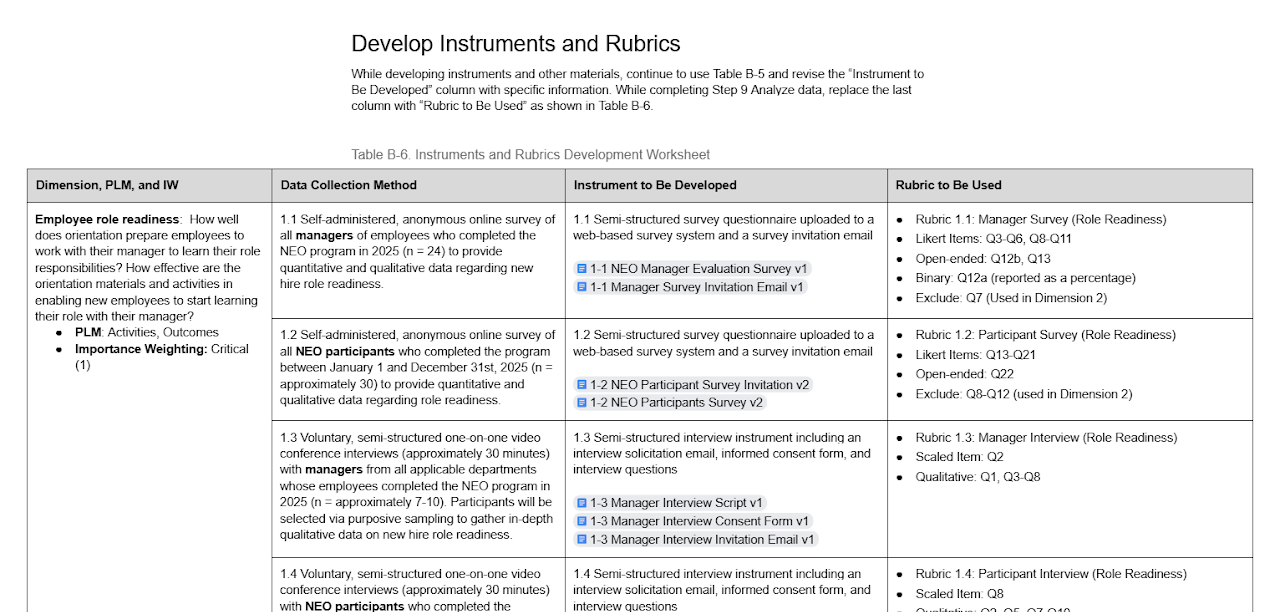

Instruments & Rubrics

For each dimension, the team created a set of complementary data collection instruments. These included semi-structured online surveys and one-on-one video conference interviews, targeting both managers and NEO participants. The surveys were designed to capture quantitative data through Likert-scale items alongside insights from open-ended questions, while the interviews used purposive sampling to gather richer qualitative perspectives.

Where possible, instruments were shared across dimensions (for example, the participant survey and participant interview had specific questions allocated to each dimension), which streamlined the data collection process and reduced respondent burden.

The rubrics established a four-level performance framework: Exceeds Expectations, Met Expectations, Improvement Needed, and Serious Problems Detected. These levels were applied consistently across all data sources. Quantitative items were scored using Likert-scale averages, with a defined numerical range for each performance level. Qualitative data from interviews were assessed by pairing scaled ratings with thematic analysis of participant comments.
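The scoring of quantitative items can be illustrated with a minimal sketch. The cutoff values below are hypothetical and chosen only for illustration, as is the assumption of a 5-point scale; this report does not list the actual numerical ranges the team used. The function name `rate_item` is likewise an illustrative assumption.

```python
from statistics import mean

# HYPOTHETICAL lower bounds on an assumed 5-point Likert scale.
# The evaluation's real numerical ranges are not given in this report.
HYPOTHETICAL_CUTOFFS = [
    (4.5, "Exceeds Expectations"),
    (3.5, "Met Expectations"),
    (2.5, "Improvement Needed"),
]
LOWEST_LEVEL = "Serious Problems Detected"


def rate_item(responses):
    """Return (average, performance level) for one Likert item."""
    avg = mean(responses)
    # Walk the cutoffs from highest to lowest and stop at the first one met.
    for lower_bound, level in HYPOTHETICAL_CUTOFFS:
        if avg >= lower_bound:
            return avg, level
    return avg, LOWEST_LEVEL


# Made-up responses, not data from this evaluation.
print(rate_item([5, 5, 4, 5]))
```

Keeping the cutoffs in a single table means the same ranges are applied to every item and every data source, which is the consistency the rubrics were designed to provide.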

The team also created dimensional triangulation rubrics that allowed synthesis across all data sources within each dimension. This triangulation approach ensured that no single data source could drive the overall judgment, strengthening the credibility and defensibility of the evaluation's conclusions.

Results

Findings draw on 27 NEO participants and 14 managers who completed surveys, along with 4 participants and 3 managers who participated in interviews.

Dimension 1: Employee Role Readiness

Rating: Meets Expectations

A consistent narrative emerged across all data sources: the NEO program lays a solid general foundation but falls short of preparing new hires for the specifics of their roles. Survey scores from both managers and participants were generally strong (averaging in the 4.3–4.9 range for most items). However, the self-paced online training modules stood out as a clear weak point: they earned the lowest score in the entire evaluation (3.63) and were the only item to draw any disagreement responses.

Open-ended feedback explained why. Participant survey comments described the content as too dense, too general, and difficult to connect to their roles, while the interviews surfaced a stronger sense of being overwhelmed and time-pressured alongside the new workload. Managers echoed this, noting that department-specific systems, product knowledge, and process details aren't covered in NEO, which leaves the burden of role-specific training on new employees' teams.

Interview data from both groups reinforced the pattern. Ratings were strong (4.33 for managers, 4.25 for participants), but qualitative comments consistently pointed to gaps in role-specific preparation, mentoring consistency, and applied learning opportunities. The manager survey rated "Exceeds Expectations" under the rubrics, while the participant survey, manager interview, and participant interview each rated "Met Expectations." Because the triangulation rubric required at least three of four sources at "Exceeds" for the dimension to be rated "Exceeds," the overall rating was determined to be "Meets Expectations."
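The "at least three of four sources" rule described above can be sketched as a simple check. This is only an illustration of that one stated rule, not the team's full triangulation rubric; the function name `qualifies_for_exceeds` and the dictionary layout are assumptions introduced here.

```python
EXCEEDS = "Exceeds Expectations"


def qualifies_for_exceeds(source_ratings, required=3):
    """True if at least `required` data sources were rated "Exceeds"."""
    exceeds_count = sum(
        1 for level in source_ratings.values() if level == EXCEEDS
    )
    return exceeds_count >= required


# Source-level ratings for Dimension 1 as reported in this section.
role_readiness = {
    "manager survey": "Exceeds Expectations",
    "participant survey": "Met Expectations",
    "manager interview": "Met Expectations",
    "participant interview": "Met Expectations",
}

# Only one of four sources is at "Exceeds", so the check fails.
print(qualifies_for_exceeds(role_readiness))
```

Because only the manager survey reached "Exceeds," the dimension does not qualify for the top level, which matches the reported outcome for Role Readiness.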

Dimension 2: Employee Feeling Welcomed

Rating: Meets Expectations

The cultural onboarding story was notably stronger. Participant survey scores ranged from 4.74 to 4.89, with no negative responses recorded, placing the survey squarely at "Exceeds Expectations." Participant interviewees rated their experience at 4.25 and described positive early connections with their teams (introductions, check-ins, and team lunches were frequently mentioned as highlights).

However, some participants noted that the orientation felt somewhat impersonal and that connection beyond their immediate team was limited, which brought the interview data closer to "Met Expectations." The open-ended survey responses reinforced this, with the most common suggestion being more interaction with people rather than time spent alone at a computer. Under the dimensional triangulation rubric, with one source at "Exceeds" and one at "Met Expectations," the overall rating was determined to be "Meets Expectations."

Recommendations

Based on the evaluation findings, the NEO program should be maintained largely as designed, with targeted improvements in three priority areas.

1. Restructure the Self-Paced Learning Experience

The self-paced modules were the lowest-rated element of the program (3.63) and the only item to draw disagreement responses. Distributing the required modules across the first two to four weeks, introducing role-specific pathways, and replacing portions of the existing content with short, scenario-based exercises would address the volume, relevance, and pacing issues that participants and managers consistently described.

2. Standardize Early Mentor Touchpoints

While manager engagement was nearly universal in the first week, only 77.8% of new hires reported meeting with their assigned mentor. Establishing a small set of defined mentor touchpoints during the first two weeks, with simple guidance on what to cover, would reduce variability without significantly increasing burden on mentors.

3. Expand Opportunities for Connection

Although team-level welcome was a clear strength, participants described the orientation itself as impersonal and noted limited connection to the broader organization. Cross-team introductions, a peer cohort for new hires starting in the same month, and additional visibility from senior leaders beyond the Day 1 CEO presentation would build on an existing strength and help new hires transition from feeling welcomed to feeling integrated into Tamrack.

Limitations

Several factors should be considered when interpreting these findings. The sample sizes were small, and the evaluation captured a single point in time with the 2025 cohort, so year-over-year trends and differences between departments cannot be reliably drawn from this data.

The evaluation also relied on a single upstream stakeholder, the LPS Director, as the primary source of program context, since the HR Director was unavailable throughout the evaluation period. The LPS Director additionally conducted all semi-structured interviews on behalf of the evaluation team. Although anonymity was emphasized, respondents may have moderated their feedback given the interviewer's authority over the program.

The consistency between interview and survey findings partially mitigates this risk.

Note: Some of these constraints arose because the evaluation was conducted as part of the course's case study. Some practices (such as the LPS Director conducting interviews) would not be used by an evaluator in an actual scenario.

References

American Evaluation Association. (n.d.). Guiding principles for evaluators. https://www.eval.org

Chyung, S. Y. (2019). 10-step evaluation for training and performance improvement. SAGE Publications, Incorporated.